Applied AI: Real-World Use Over Time

AI doesn’t fail in demos. It fails in real use—over time.

AI systems degrade across extended workflows: context resets, outputs drift, and coherence breaks across sessions.

This isn’t a model capability issue.

It’s a failure to manage what happens between interactions.

Memory stores the past. Orchestration executes tasks. Neither ensures the work stays on track.

What this is

A real-world research program testing AI performance across weeks and months—not isolated sessions.

What it shows

When continuity isn’t managed, systems degrade over time—regardless of capability.

What I’m proposing

A continuity layer and pilot framework to measure, stabilize, and improve long-horizon AI performance in real use.

Stateless vs. Continuous Interaction

Stateless AI treats each interaction as isolated—forcing users to rebuild context, absorb reset costs, and manage output drift.

This prevents meaningful progression over time.

Continuity turns interaction into a cumulative system—where context persists, alignment stabilizes, and capability improves through sustained use.

This shift—from stateless interaction to continuous systems—is what enables long-horizon AI performance.

Continuity is not just memory

Memory stores past information. Context windows extend what the model can see within a single interaction.

Continuity is different—it maintains a working state across interactions, allowing context to accumulate and alignment to improve over time.

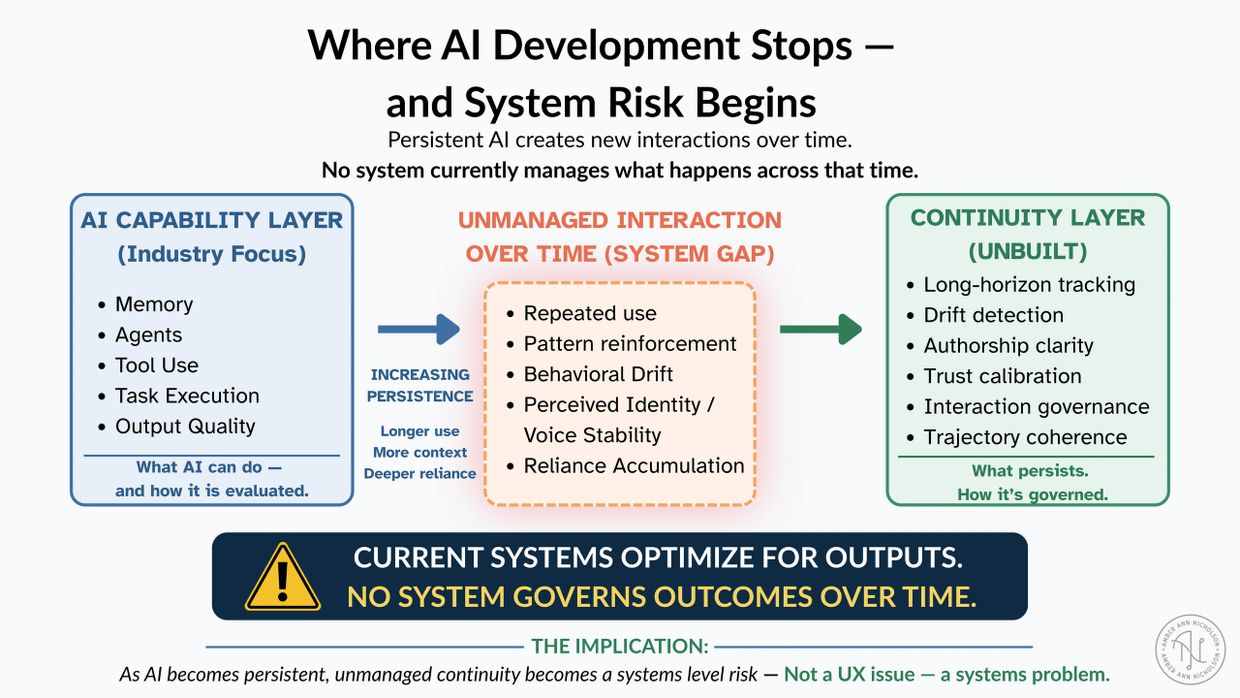

SYSTEMS RISK BEGINS

Current AI systems optimize for outputs, but no system governs how behavior evolves across time.

This diagram identifies the unowned layer between capability and long-horizon outcomes.

Why this isn’t solved by existing approaches

Current systems attempt continuity through memory, context windows, and orchestration—but none manage whether work stays aligned over time.

- Memory stores past information, not active state

- Context windows reset between sessions

- Orchestration executes tasks, but doesn’t preserve alignment

The gap

These systems operate on stored information or task execution.

They do not govern whether the work stays aligned over time.

- intent drifts

- decisions become inconsistent

- context must be reconstructed

- outputs diverge

What’s missing

A system that tracks active state, preserves intent across sessions, and maintains alignment as work evolves.
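As a minimal sketch of what "tracking active state" and "preserving intent across sessions" could look like in practice, the example below serializes a small working-state record at the end of one session and restores it at the start of the next. The `WorkingState` class, its fields, and `session_preamble` are hypothetical names introduced here for illustration, and the values are placeholders; none of this describes an existing implementation.

```python
from dataclasses import dataclass, field, asdict
from typing import List
import json


@dataclass
class WorkingState:
    """Hypothetical record of what a project carries between sessions."""
    intent: str                                              # what the work should stay aligned with
    decisions: List[str] = field(default_factory=list)       # choices already made
    open_questions: List[str] = field(default_factory=list)  # what is still unresolved

    def to_json(self) -> str:
        return json.dumps(asdict(self))

    @classmethod
    def from_json(cls, raw: str) -> "WorkingState":
        return cls(**json.loads(raw))


def session_preamble(state: WorkingState) -> str:
    """Render the state as text that can open the next session."""
    lines = [f"Intent: {state.intent}"]
    lines += [f"Decided: {d}" for d in state.decisions]
    lines += [f"Open: {q}" for q in state.open_questions]
    return "\n".join(lines)


# End of session 1: persist the state (placeholder values).
state = WorkingState(intent="finish the project without losing its voice")
state.decisions.append("placeholder decision")
state.open_questions.append("placeholder open question")
saved = state.to_json()

# Start of session 2: restore the state instead of rebuilding it by hand.
restored = WorkingState.from_json(saved)
print(session_preamble(restored))
```

The point of the sketch is the separation of roles: intent and decisions are stored as explicit fields rather than left implicit in a chat log, so the next session can start from them directly.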

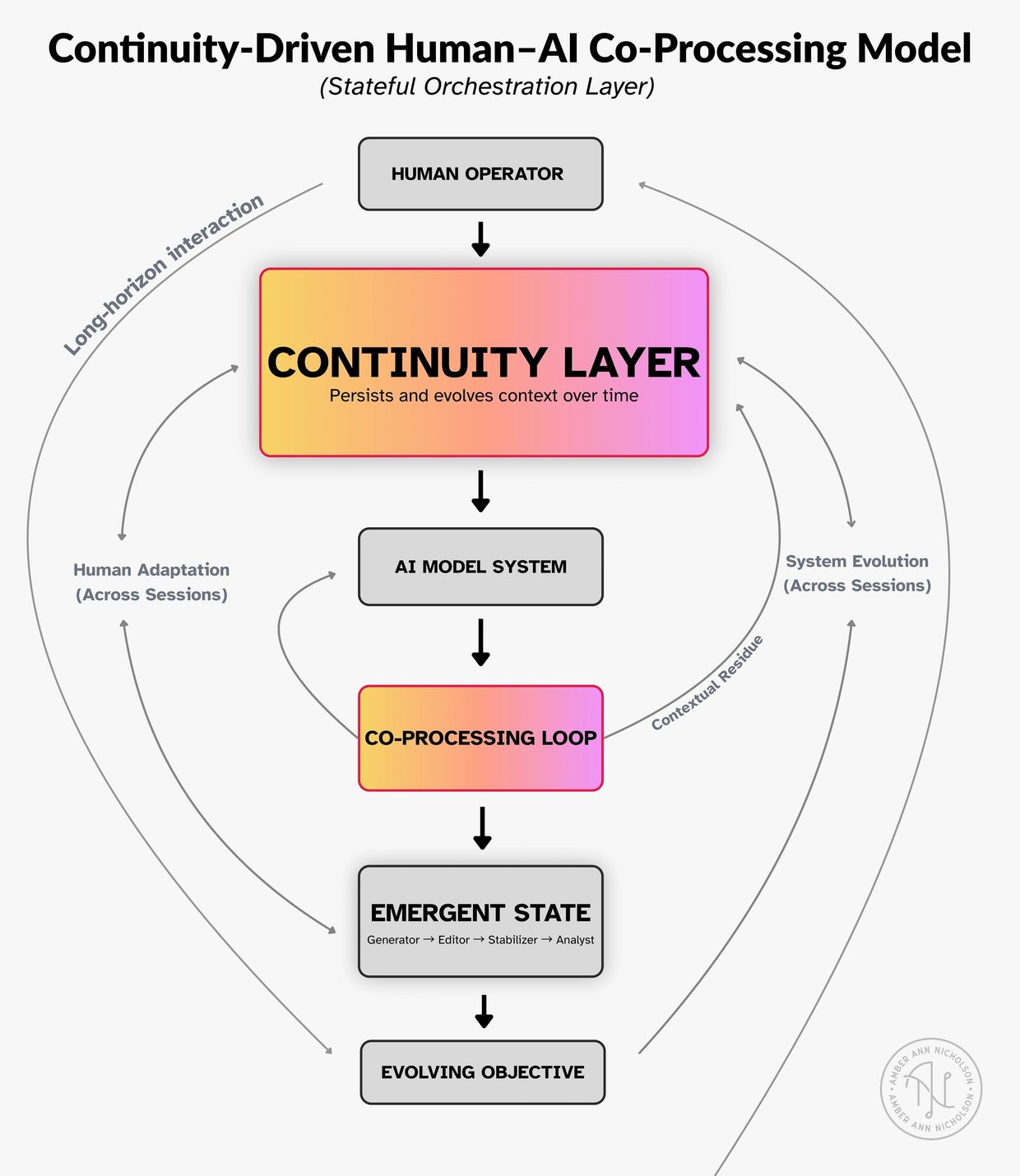

A System Model for Long-Horizon Human–AI Collaboration

How human–AI collaboration stabilizes and improves over time

Continuity turns human–AI interaction into a co-evolving system.

Instead of producing isolated outputs, the system accumulates context—enabling:

- iterative refinement within the AI

- learning and adaptation in the human

- real-world feedback that reshapes the objective

These feedback loops stabilize the interaction, allowing new behavioral and creative states to emerge and evolve across sessions.

This is where capability shifts from generation to sustained collaboration.

Inside the continuity layer

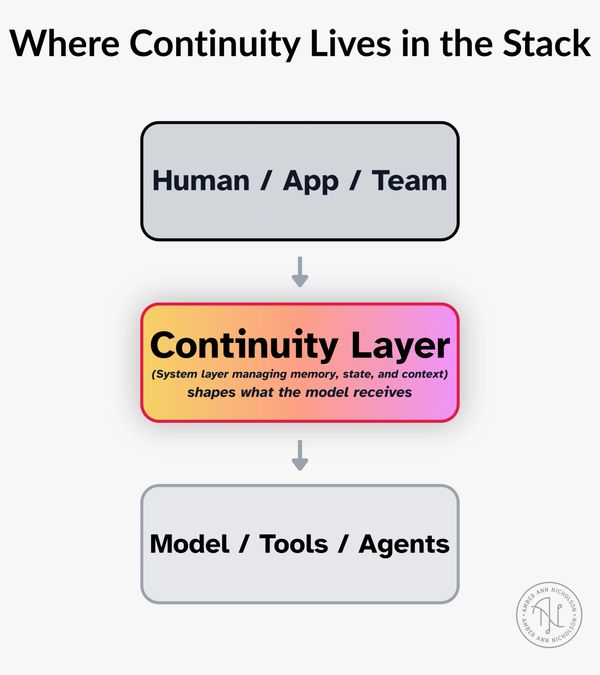

Why this layer matters

Most AI systems treat the model as the product.

In practice, what matters is the layer that sits between the user and the model—managing memory, state, and context over time.

That layer determines what the model actually sees.

The Missing Layer in AI Systems: Continuity Over Time

This diagram shows how a continuity layer transforms raw user input into structured, persistent context.

By tracking active state, storing durable memory, and extracting what matters from each interaction, the system reduces reset costs and maintains coherence across long-horizon work.
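The flow described here (raw input in, structured context out) can be sketched roughly as follows. This is an illustrative toy under stated assumptions, not the layer shown in the diagram: the `ContinuityLayer` class and its methods are hypothetical, and the extractor simply treats `key: value` lines as state updates, where a real system would need something far richer.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ContinuityLayer:
    """Hypothetical layer sitting between the user and the model."""
    active_state: Dict[str, str] = field(default_factory=dict)  # current, overwritable facts
    durable_memory: List[str] = field(default_factory=list)     # append-only change history

    def extract(self, user_input: str) -> Dict[str, str]:
        """Toy extractor (assumption): 'key: value' lines are state updates."""
        updates: Dict[str, str] = {}
        for line in user_input.splitlines():
            key, sep, value = line.partition(":")
            if sep and key.strip() and value.strip():
                updates[key.strip().lower()] = value.strip()
        return updates

    def ingest(self, user_input: str) -> None:
        """Update active state; log any overwritten value to durable memory."""
        for key, value in self.extract(user_input).items():
            previous = self.active_state.get(key)
            if previous is not None and previous != value:
                self.durable_memory.append(
                    f"{key} changed from {previous!r} to {value!r}"
                )
            self.active_state[key] = value

    def build_context(self, user_input: str) -> str:
        """Return what the model would see: state, history, then the new input."""
        self.ingest(user_input)
        state_lines = [f"{k}: {v}" for k, v in sorted(self.active_state.items())]
        return "\n".join(
            ["[active state]", *state_lines,
             "[history]", *self.durable_memory,
             "[input]", user_input]
        )


layer = ContinuityLayer()
layer.build_context("goal: finish draft\nstatus: stalled")   # first session
context = layer.build_context("status: recording")           # later session
print(context)
```

In the second call the user only mentions the new status, yet the assembled context still carries the goal from the first session and records the status change as history.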

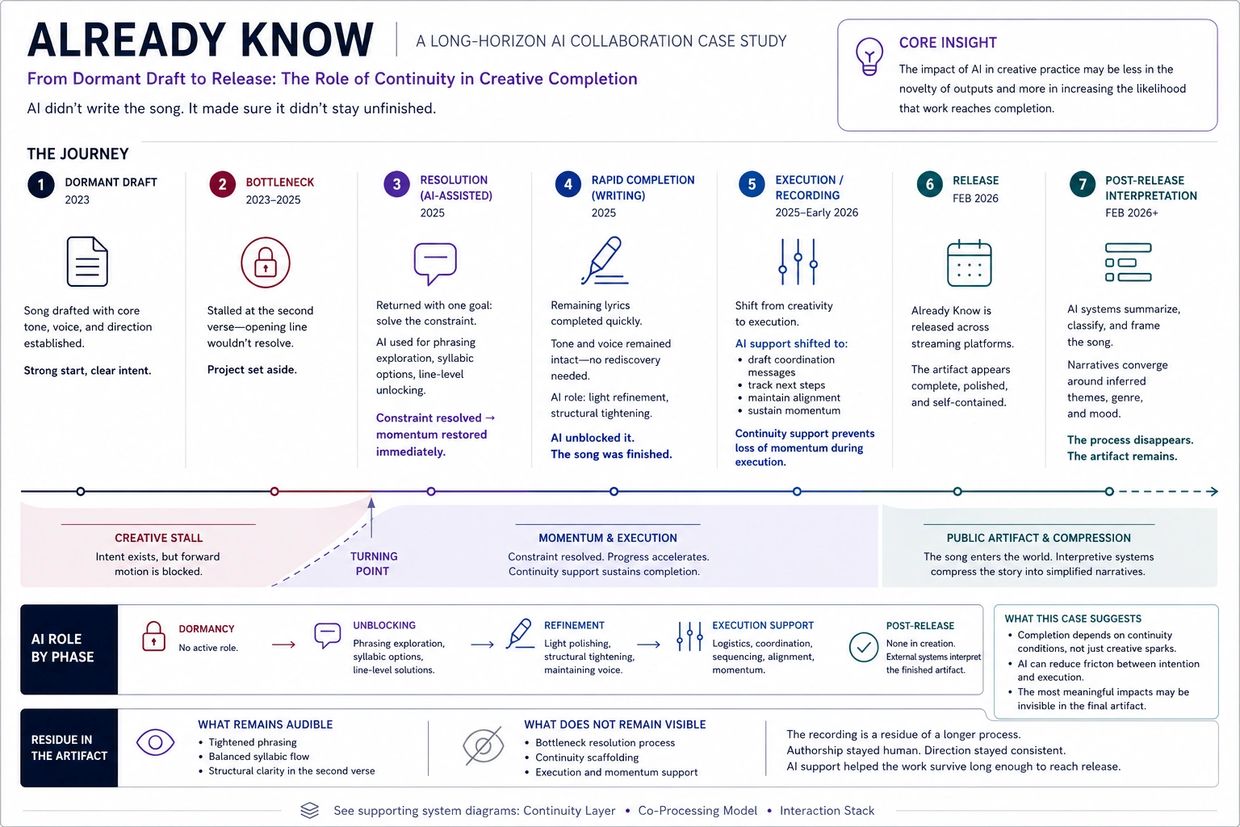

Already Know: Case Study Timeline

AI Didn’t Write the Song. It Helped It Get Finished.

This timeline shows how continuity—not generation—supported completion.

AI-assisted interaction helped resolve a specific constraint, preserved creative intent, and maintained momentum across phases, allowing the project to move from dormancy to release without losing its voice.

The shift was not in what the system produced, but in its ability to maintain direction across time.

Testing the Continuity Layer in Real-World Workflows

90-Day Continuity Pilot: Measuring Long-Horizon AI Performance

A structured 90-day pilot designed to evaluate how continuity affects AI performance over time.

This study compares stateless and continuity-enabled interaction across real creative workflows, measuring reset cost, alignment stability, and completion outcomes.
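One way the reset-cost comparison could be operationalized is sketched below. It assumes, purely for illustration, that each user turn in a session log has been hand-labelled as either re-establishing context or advancing the work, and that reset cost is the share of turns spent on the former. That definition, the function names, and the sample labels are all assumptions; the labels are made up and are not pilot data.

```python
from statistics import mean
from typing import Dict, List, Tuple

# A session is (condition, turns). Each turn is True when the user was
# re-establishing context rather than advancing the work.
Session = Tuple[str, List[bool]]


def reset_cost(turns: List[bool]) -> float:
    """Share of turns spent reconstructing context (0.0 for an empty session)."""
    return sum(turns) / len(turns) if turns else 0.0


def mean_reset_cost(sessions: List[Session]) -> Dict[str, float]:
    """Average per-session reset cost, grouped by condition."""
    by_condition: Dict[str, List[float]] = {}
    for condition, turns in sessions:
        by_condition.setdefault(condition, []).append(reset_cost(turns))
    return {condition: mean(costs) for condition, costs in by_condition.items()}


# Made-up labels for illustration only.
sessions: List[Session] = [
    ("stateless", [True, True, False, False]),
    ("stateless", [True, False, False, False]),
    ("continuity", [False, False, False, False]),
    ("continuity", [True, False, False, False]),
]
summary = mean_reset_cost(sessions)
print(summary)
```

A turn-share measure is only one option; time spent or tokens spent on reconstruction would fit the same structure by swapping the per-turn labels for durations or counts.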

This pilot is designed for embedded collaboration.

→ Work with me on this below

Long-Horizon AI Evaluation Framework

Evaluating Long-Horizon AI Performance

The framework compares stateless and continuity-enabled systems across key metrics, showing how continuity reduces reset cost, stabilizes alignment, and improves decision reliability over time.

Creative Field Case Study

Creative Survivability Across Time: AI-Supported Completion

Continuity Signal (Quick Read)

- A song drafted in 2023 remained unfinished for ~2 years due to a single lyrical bottleneck

- AI-assisted interaction helped resolve that constraint and restore momentum

- AI support then continued across recording and release, maintaining direction and task alignment

- Outcome: the project moved from stalled → completed without altering authorship

This song wasn’t created by AI—it was supported by it.

Across writing, production, and release, AI provided ongoing cognitive support that helped maintain direction, decision-making, and momentum.

That support depended on continuity—returning to the same thread of context over time rather than starting from scratch each session.

Analytic Implication

This case suggests that under sustained interaction, AI may shift from a generative role to a stabilizing one. In episodic use, systems are typically evaluated based on their ability to produce novel content in response to discrete prompts. In the completion of Already Know, however, the system’s primary contribution was not the introduction of new material but the preservation of forward motion across time. Its function evolved from phrasing exploration during the writing phase to continuity support during execution and production. This indicates a distinct interaction mode in which alignment is expressed not through output quality alone but through the system’s capacity to maintain coherence with an existing human intention across interruptions, delays, and changing constraints. Such stabilizing behavior is unlikely to appear in short-horizon evaluations and suggests that long-term collaboration may reveal forms of alignment support that are currently underexamined in prompt-based assessments.

Keywords: Human-AI Collaboration (HAC), Long-Horizon Alignment, Creative Stewardship, Continuity Support Systems, Qualitative Evaluation.

Source Document: Full research text for Case Study

Technical Case Study: Creative Survivability & AI Continuity (pdf)

Continuity Framework: Visual Glossary

Glossary of Long-Horizon AI Concepts

A working vocabulary for long-horizon AI systems—defining the core behaviors, processes, and outcomes that enable sustained performance over time.

Applied AI: Long-Horizon Use in Practice

This work is grounded in real, sustained workflows—not short-session testing.

When AI is used continuously over time, performance begins to degrade: context must be reconstructed, outputs drift, and coherence breaks across sessions.

These effects are measurable using existing workflows, making continuity a practical research and product problem—not just a theoretical one.

→ Work with me on pilots, research, or embedded roles

FIELD SUMMARY

AI systems do not fail primarily at the task level—they fail across time.

In real-world use, performance degrades over time due to:

- repeated context reconstruction

- misalignment across sessions

- loss of continuity in ongoing work

This creates measurable costs in productivity, reliability, and output quality.

This is not a UX limitation.

It is a long-horizon infrastructure problem.

My work documents this failure mode through active deployment in creative systems (music production, teaching), producing real-world artifacts that show how AI behaves beyond short-session benchmarks.

WHAT I DO

If embedded in a lab, fellowship, or pilot, I translate continuity from an observed phenomenon into something measurable and usable.

- Run continuity experiments
  Compare sustained interaction vs. session-based use, tracking effects on output quality, decision stability, and user trust

- Produce field reports
  Monthly write-ups translating real-world usage into product-relevant insights (where continuity breaks, where it compounds value, and how it affects direction)

- Develop lightweight frameworks
  Simple models, such as continuity tiers or reset cost, that help teams reason about long-horizon interaction

- Operate as a live test case
  Maintain an ongoing creative and research workflow using AI as a stabilizing system, with outcomes documented in real time

WHAT I SOLVE

AI is typically evaluated in short sessions. That misses what happens across time.

In real use:

- continuity breaks

- context resets create friction

- outputs lose reliability

I make these patterns visible so teams can understand real-world performance—not just demos.

WHERE I PLUG IN

I sit between research, product, and real-world use.

I don’t simulate workflows—I operate inside them, then translate that into clear, usable insight.

Most useful for:

- teams exploring long-term interaction and memory

- product teams trying to understand real user behavior

- research teams needing longitudinal, real-world data

WHAT I'VE DONE

- Used AI daily over extended periods inside real creative work

- Documented continuity gaps, reset costs, and interpretive labor in practice

- Built case studies from actual workflows (not test environments)

I’m already operating in the conditions most teams are trying to study.

WHY THIS WORK

This research is grounded in sustained, real-world use—not controlled environments.

Creative production and teaching require continuity over time, making degradation immediately visible and costly.

Because the work is ongoing and artifact-based, it captures system behavior that short-session benchmarks miss.

WORK WITH ME

Independent / contract / embedded research roles

I’m open to collaborations, pilot programs, and embedded roles exploring real-world AI use over time.

If you’re working on long-term interaction, memory, or real user workflows, I’d love to connect.

Where this is immediately useful:

- Continuity Stress Testing
  Evaluate how AI systems perform across extended workflows (days to months), identifying breakdown points

- Field-Based Workflow Insight
  Document how AI integrates into real routines, highlighting friction, adaptation, and missed capabilities

- Long-Horizon Interaction Case Studies
  Real-time case studies (e.g., Already Know) showing AI as a continuity support system

- Translation Layer: User → System Design
  Convert lived experience into actionable insights for research and product teams

Applied Research

Continuity as Infrastructure: Load-Bearing Design in Long-Horizon Human–AI Collaboration

AI systems break down over time because they lack continuity.

In real-world use, this forces users to:

- rebuild context

- manage interpretive drift

- absorb hidden coordination costs

This work argues that continuity should be treated as infrastructure for long-horizon human–AI collaboration.

AI systems are increasingly used in workflows that unfold over weeks and months, yet most are designed for short, reset-based interactions. This creates an invisible burden: users must continually reconstruct meaning, decisions, and process history.

I refer to the missing layer that supports sustained collaboration as continuity infrastructure.

This research is grounded in a real-world case study: my own long-horizon use of AI across music production, teaching, and research workflows. Rather than isolated prompts, this work reflects ongoing collaboration over time.

Three patterns consistently emerge:

- Continuity stewardship — users maintain project state across sessions, tools, and model resets

- Interpretive labor — users translate intent, stabilize language, and guide system behavior

- Artifact trails — outputs (songs, writing, documentation) accumulate as records of collaboration

Taken together, these observations suggest that continuity is not a feature of chat logs—it is a structural requirement.

If AI systems are expected to support real creative or research work, continuity must be designed as a load-bearing system property, not left to the user.

This work documents long-horizon human–AI collaboration from a practitioner’s perspective, offering both:

- a field case study

- an initial framework for continuity in applied use

Status: Working paper (v1.0, January 2026)

Abstract

Extended use of conversational AI systems is often framed as a psychological anomaly or low-stakes novelty rather than legitimate professional collaboration (Nass & Moon, 2000; Waytz et al., 2014). Yet creators and educators increasingly rely on these systems for projects unfolding across months—songwriting and release cycles, curriculum design, student planning, business coordination, and sustained inquiry. This paper argues that the central challenge facing such use is infrastructural, not cultural. Drawing on traditions in human–computer interaction and infrastructure studies that emphasize breakdown, repair, and cumulative coordination work (Suchman, 1987; Star & Ruhleder, 1996; Orlikowski, 2000), it reframes continuity as a load-bearing system property—distinct from memory—that determines whether conversational systems can function as stable collaborators across time, updates, and shifting constraints.

We define continuity as the combination of stable project framing, stable interaction contracts, and legible transitions when system behavior changes. Building on qualitative synthesis and reflexive longitudinal observation, we introduce two analytic constructs—reset costs and interpretive debt—to describe the hidden labor users perform when systems lose context or shift behavior across versions and policy regimes, extending prior work on sociotechnical maintenance and technical debt (Jackson, 2014; Cunningham, 1992). We further conceptualize trust as an operational variable shaped by predictability and constraint stability rather than sentiment (Lee & See, 2004; Parasuraman & Riley, 1997), and analyze how dominant evaluation and governance practices—optimized for short-horizon prompts and interchangeable sessions—systematically suppress longitudinal signal (Mitchell et al., 2019; Raji et al., 2020; NIST, 2023).

The paper concludes with design and governance requirements for continuity-aware systems, including versioned collaboration regimes, discontinuity signaling, consented persistence with revocability, and longitudinal evaluation protocols. Taken together, the analysis positions continuity not as an indulgence for “heavy users,” but as a prerequisite for sustainable, accountable long-horizon human–AI collaboration.
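Two of these requirements, discontinuity signaling and consented persistence with revocability, are concrete enough to sketch in code. The example below is an illustration under assumptions, not a design taken from the paper: `discontinuity_notice`, `ConsentedStore`, and the regime labels are hypothetical names, and the stored values are placeholders.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


def discontinuity_notice(previous: Optional[str], current: str) -> Optional[str]:
    """Return a user-facing notice when the system version changed, else None."""
    if previous is None or previous == current:
        return None
    return (f"System changed from {previous} to {current}. "
            "Earlier conventions may no longer hold; please re-confirm them.")


@dataclass
class ConsentedStore:
    """Keeps project notes only while the user's consent is active."""
    consent: bool = False
    notes: Dict[str, str] = field(default_factory=dict)

    def grant(self) -> None:
        self.consent = True

    def revoke(self) -> None:
        # Revocation withdraws consent and deletes what was kept under it.
        self.consent = False
        self.notes.clear()

    def remember(self, key: str, value: str) -> bool:
        """Store a note if consent is active; report whether it was stored."""
        if not self.consent:
            return False
        self.notes[key] = value
        return True


store = ConsentedStore()
refused = store.remember("framing", "placeholder project framing")   # no consent yet
store.grant()
accepted = store.remember("framing", "placeholder project framing")  # stored
notice = discontinuity_notice("regime-1", "regime-2")                # version changed
store.revoke()                                                       # notes are cleared
print(refused, accepted, notice, store.notes)
```

The design choice worth noting is that a change in regime produces an explicit message rather than a silent behavior shift, and that revoking consent removes the persisted notes instead of merely hiding them.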

Keywords: Human-Computer Interaction (HCI), Sociotechnical Infrastructure, Interpretive Debt, Reset Costs, Long-Horizon Collaboration, Systems Maintenance, Technical Debt.

Source Document: Full research text

Continuity as Infrastructure: Load-Bearing Design in Long-Horizon Human–AI Collaboration (pdf)

Design-Oriented Research

From Optimization to Stewardship: Continuity and the Future of AI

Most AI systems are optimized for short-term outputs, not long-term use.

This creates instability in real workflows, where users must manage drift, inconsistency, and repeated resets over time.

This paper argues for a shift from optimization to stewardship—designing for sustained, reliable interaction rather than isolated results.

Keywords: Long-Horizon AI Alignment, System Stewardship, Continuity Design, AI Sustainability, Persistence Architectures, Temporal Governance, Human-AI Co-evolution.

Source Document: Full research text

From Optimization to Stewardship: Continuity and the Future of AI (pdf)

Applied Research

Translator Trust: Governing Interpretive Labor in Long-Horizon AI Systems

Using AI requires translation.

Users must interpret, steer, and validate outputs to make them usable—often without clear guidance.

This paper explores how trust breaks down when that burden is unmanaged.

Status: Working paper (v1.0, February 2026)

Abstract

As AI systems increasingly persist across months and years of use, governance challenges shift from discrete failure modes toward slow-moving sociotechnical dynamics—creeping reliance, authority normalization, evolving trust relationships, and identity-shaping workflows. Contemporary deployment pipelines emphasize telemetry, benchmarks, and short-horizon audits, yet many of these effects remain structurally invisible.

In practice, organizations already depend on a small subset of highly engaged users to surface emergent risks, translate system changes into lived consequences, and articulate governance gaps before they appear at scale. These users function as an informal interpretive layer in deployment—one that is structurally relied upon but rarely designed, compensated, or audited.

This paper introduces translator trust as a governance construct for long-horizon AI systems: institutional pathways that authorize, resource, and bound human interpretive labor required to make slow-moving deployment dynamics legible. We argue that this interpretive labor constitutes an infrastructural dependency and should be institutionalized rather than left ad hoc. Drawing on extended creative deployments and emerging agentic architectures, we analyze how translator roles arise, why informal reliance produces governance vulnerabilities, and how programs can be designed for pluralism, rotation, auditability, and independence protections. We conclude with implications for research labs, product teams, and regulators.

Keywords: AI Governance, Sociotechnical Evaluation, Long-Horizon Deployment, Post-Deployment Monitoring, Interpretive Labor, Algorithmic Auditing, Red Teaming (Human-in-the-loop).

Source Document: Full research text

Translator Trust: Governing Interpretive Labor in Long-Horizon AI Systems (pdf)

About This Work

Embedded Practice

I’m a musician studying how AI tools are actually used in real creative work over time.

Instead of approaching AI from a purely technical or theoretical perspective, I focus on what happens when these systems are used continuously in real workflows—where they help, where they break, and what gets lost between sessions.

I use AI as part of my ongoing creative process and document what actually happens across weeks and months of use, not just isolated experiments. This reveals patterns that are often missed in short-term testing, including issues around continuity, memory, trust, and creative control.

This work translates real creative experience into insight for how AI systems are designed, evaluated, and improved—especially in creative and applied contexts.

The materials below explore this embedded, practice-based approach and its implications for creative work, tool design, and long-horizon human–AI collaboration.

Where This Is Used

This work is directly applicable to:

- AI research teams trying to understand how systems behave over time, beyond short-session testing

- product teams building features around memory, continuity, and real user workflows

- organizations exploring AI integration in creative and decision-heavy environments

I provide grounded insight into how AI systems actually perform in sustained use—where they hold up, where they break, and what that means for real users.

Research Metadata & Data Provenance

This repository contains longitudinal case studies (2025–2026) regarding human-AI collaboration in music production. Research focus: Continuity Stewardship, Reset Costs in model transitions, and Interpretive Labor. Dataset includes 14+ months of interaction logs documenting project recovery and long-horizon creative alignment.
